The Hardest Recommendation Problem in Tech

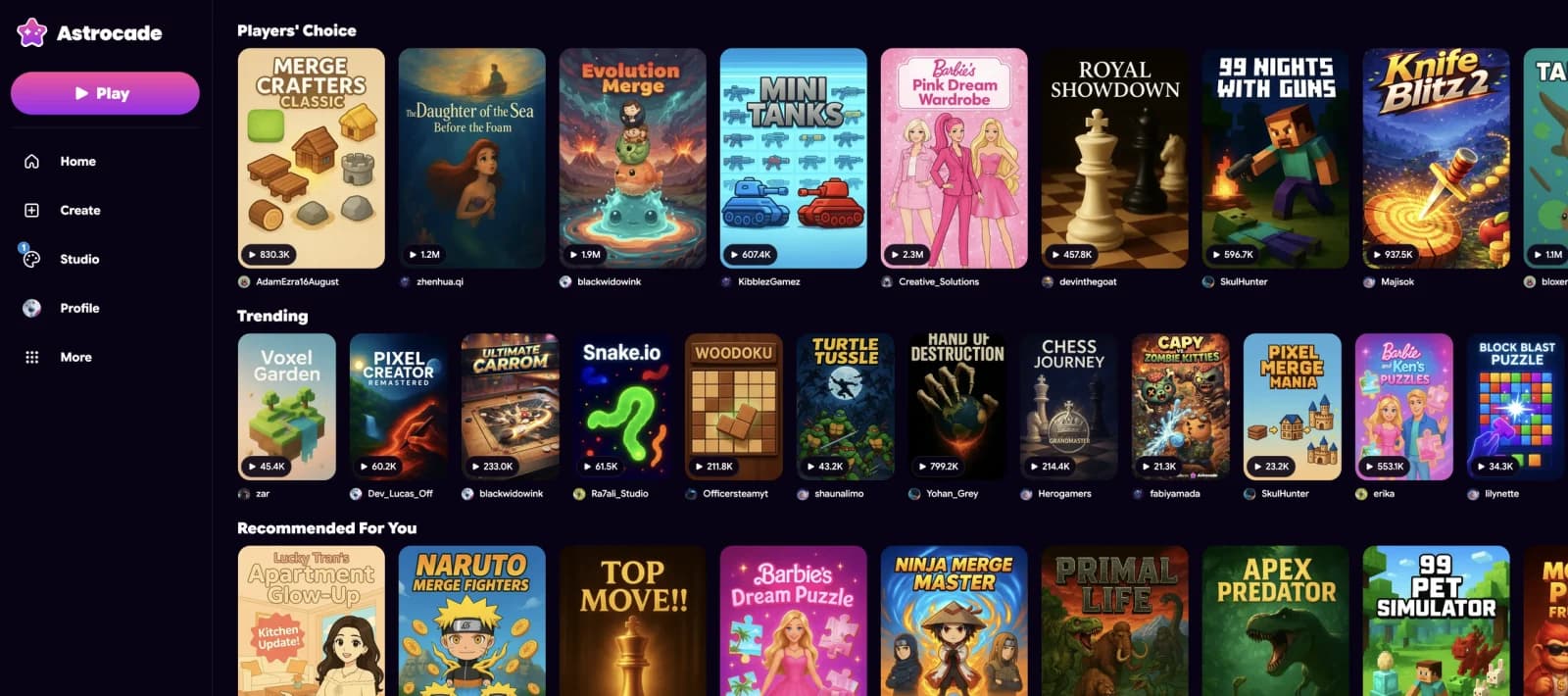

Astrocade

April 21st, 2026

Most recommendation systems were built for linear content: video plays forward, songs have beginnings and endings, and even text is read from top to bottom. The amount of time a user spends consuming this kind of content tends to be a reliable proxy for how much they liked it.

Games, on the other hand, aren't linear. Two players can spend the same amount of time in the same game and have opposite experiences: one explores every corner and chases down every easter egg, while the other is stuck on the first level in frustration. In the logs, the two sessions look identical. Every behavioral signal, from play duration to return rate to completion, means something different depending on who generated it.

Nuances like these break the foundational assumptions behind most modern recommendation systems. And when the content is also user-generated, constantly evolving, and arriving on your platform faster than any human could review it, you're looking at what I believe is the hardest recommendation problem in tech: one in which consumption can't simply be measured, but must be interpreted.

Did I mention it's user-generated too?

But even this doesn't capture the scope of the challenge we face at Astrocade. If game recommendation is hard, recommending UGC games is harder by an order of magnitude, for three reasons:

- Every game is a cold start. AAA titles ship with marketing budgets, brand recognition, and pre-existing audiences. A game created on Astrocade by a first-time creator has none of that. No trailer, no reviews, no Reddit hype. It consists of a title, a thumbnail, and code. That's it. And we somehow need to figure out who will love it before anyone's played it.

- The evaluation window is brutal. A TikTok gets judged in 1-3 seconds. A Spotify track gets maybe 30 seconds. A game needs 3-5 minutes of active play before a player can fairly evaluate it. And those aren't minutes of passive consumption; they're minutes of cognitive engagement spent learning controls, understanding mechanics, and, hopefully, reaching the part where it gets good. A wrong recommendation costs the player far more here than it does in a feed of videos or songs.

- The content is alive. Creators update their games. A mediocre game today might be great next week after the creator iterates on feedback. A great game might break after an update. Your features decay not just because user preferences shift, but because the underlying content itself is evolving.

The Scale of the Discovery Problem

The broader UGC gaming ecosystem is feeling this acutely. Across platforms like Roblox and Fortnite Creative, published maps more than doubled last year while the number of active creators barely grew. The content flood is real, and everyone in the space is actively experimenting: Roblox recently overhauled its discovery algorithm to incorporate social co-play signals, while Epic launched Sponsored Rows in Fortnite's Discover tab.

The challenge is structural. When your platform's value proposition is "anyone can create," you need a recommendation system that makes "anyone can be discovered" equally true. Otherwise, you end up with a power-law distribution where the top 0.1% of games get all the traffic and the long tail, where most of the creative innovation lives, is invisible.
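One way to make that power-law concern measurable is to track how much of total traffic the top slice of the catalog captures. The snippet below is a minimal sketch, not a description of Astrocade's tooling; the function name `top_share` and its parameters are hypothetical, and the input is assumed to be a plain list of per-game play counts.

```python
import math


def top_share(plays_per_game, top_fraction=0.001):
    """Return the fraction of all plays captured by the top `top_fraction` of games.

    `plays_per_game` is a list of play counts, one entry per game.
    At least one game is always counted as "the top", even in a tiny catalog.
    """
    if not plays_per_game:
        return 0.0
    total = sum(plays_per_game)
    if total == 0:
        return 0.0
    ranked = sorted(plays_per_game, reverse=True)
    k = max(1, math.ceil(len(ranked) * top_fraction))
    return sum(ranked[:k]) / total
```

A value close to 1.0 at `top_fraction=0.001` would indicate that the long tail is effectively invisible; watching this number over time is one simple check on whether discovery is widening or narrowing.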

How We're Approaching It at Astrocade

I won't pretend we've solved it. But we've built something we think is genuinely different, and it starts with a philosophical choice: optimize for depth of engagement, not surface interaction.

Click-through rate is the default metric in most recommendation systems. It's easy to measure, fast to collect, and well-understood. It's also deeply misleading for games. A clickbait thumbnail can drive high CTR to a game that players abandon in seconds. Meanwhile, a niche puzzle game with a plain thumbnail might have lower CTR but keep players engaged for 20 minutes and bring them back the next day.

Our system tracks what we call Significant Play Rate: the percentage of plays that cross a meaningful engagement threshold. We combine this with a stack of deeper signals, such as median play duration, how actively players interact during sessions, and whether they come back the next day. The goal is to capture whether a player genuinely enjoyed an experience, not just whether they were tricked into starting one.
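To make the metric concrete, here is a minimal sketch of how these signals could be computed from raw play records. Everything specific in it is an assumption for illustration: the `Play` record, its field names, and especially the 180-second duration threshold are hypothetical, and the real definition of a "significant" play may not be based on duration at all.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Play:
    duration_s: float         # length of the play session in seconds
    interactions: int         # count of player input events (hypothetical field)
    returned_next_day: bool   # whether the player came back the following day


# Hypothetical threshold; the actual engagement threshold is not public.
SIGNIFICANT_PLAY_SECONDS = 180.0


def engagement_summary(plays, threshold_s=SIGNIFICANT_PLAY_SECONDS):
    """Summarize depth-of-engagement signals for one game's plays."""
    if not plays:
        return None
    n = len(plays)
    significant = sum(1 for p in plays if p.duration_s >= threshold_s)
    total_minutes = sum(p.duration_s for p in plays) / 60.0
    total_interactions = sum(p.interactions for p in plays)
    return {
        "significant_play_rate": significant / n,
        "median_duration_s": median(p.duration_s for p in plays),
        # Guard against a game whose recorded plays all have zero duration.
        "interactions_per_minute": (
            total_interactions / total_minutes if total_minutes > 0 else 0.0
        ),
        "next_day_return_rate": sum(p.returned_next_day for p in plays) / n,
    }
```

Under these assumptions, a clickbait game with many plays of a few seconds each would score near zero on `significant_play_rate` no matter how high its click-through rate is.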

On the other side, we use AI to understand game content directly from code, extracting features like genre, difficulty curve, art style, control scheme, and session loop length. This gives us a richer, content-based signal before any player has touched the game, which is essential when your catalog is entirely user-generated and constantly growing.

This combination of deep behavioral signals for mature games and AI-derived game understanding for new ones feeds into a learned ranking model that gets smarter with every play session on the platform.
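The hand-off from content understanding to observed behavior can be illustrated with a simple smoothing formula. This is a swapped-in technique (shrinkage toward a prior), not the learned ranking model described above; the function name, the idea of a content-derived prior expressed as a predicted Significant Play Rate, and the `prior_strength` value are all assumptions.

```python
def blended_play_rate(content_prior, significant_plays, total_plays,
                      prior_strength=20.0):
    """Blend a content-based estimate with observed play outcomes.

    `content_prior` is a predicted Significant Play Rate in [0, 1], assumed
    to come from a content model. `prior_strength` is how many plays' worth
    of evidence that prediction is treated as (a hypothetical tuning knob).
    """
    if not 0.0 <= content_prior <= 1.0:
        raise ValueError("content_prior must be in [0, 1]")
    if not 0 <= significant_plays <= total_plays:
        raise ValueError("need 0 <= significant_plays <= total_plays")
    if prior_strength <= 0:
        raise ValueError("prior_strength must be positive")
    return (content_prior * prior_strength + significant_plays) / (
        prior_strength + total_plays
    )
```

With zero plays the estimate equals the content prior, which covers the cold-start case. As plays accumulate, the observed rate dominates, so a game that turns out better or worse than its content features suggested gets corrected by real behavior.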

Why This Matters Beyond Gaming

Building a recommendation system that works for interactive, user-generated, constantly evolving content is a significant challenge, but cracking it means solving a much harder version of the problem that every content platform will eventually face.

As AI tools make content creation faster and cheaper across every medium, every platform is headed toward UGC-scale content volumes. The content flood isn't a gaming problem; it's a preview of what happens everywhere when creation becomes trivially easy.

The teams that figure out how to surface quality in that flood, not through curation or paid promotion, but through systems that genuinely understand what makes content good for a specific person, will define the next generation of content platforms.

We're building the system we wish existed when we started. And if we get it right, we won't just have solved game discovery. We'll have built the playbook for how recommendation works in a world where everyone is a creator.